Biography

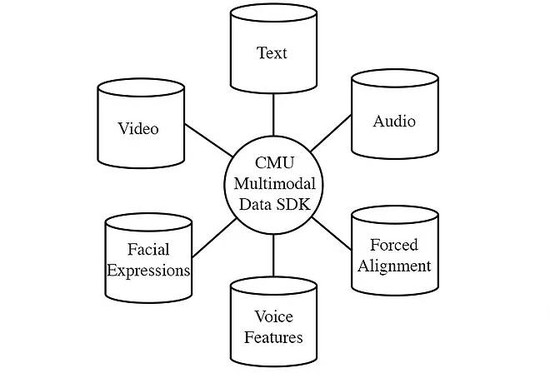

I am an applied scientist at Microsoft (Project Turing), working on large-scale pretraining of language representation and generation models. Before joining Microsoft, I was a research associate in the Language Technologies Institute at Carnegie Mellon University, advised by Professor Louis-Philippe Morency. My previous research revolved around deep learning, multimodal machine learning, and natural language processing. I obtained my M.Sc degree in Intelligent Information Systems from CMU and my B.Sc degree in Applied Mathematics from Wuhan University.

Interests

- Deep Learning

- Representation Learning

- Natural Language Processing

- Multimodal Machine Learning

Education

- M.Sc in Intelligent Information Systems, 2017 - 2018

  Carnegie Mellon University

- B.Sc in Applied Mathematics, 2013 - 2017

  Wuhan University